Introduction

Here’s something that surprises most beginners:

👉 A model can be 95% accurate—and still be completely useless.

Why? Because accuracy alone doesn’t tell the full story.

That’s why understanding accuracy vs precision vs recall is critical in machine learning.

These metrics are widely used in:

- Spam detection

- Fraud detection

- Medical diagnosis

- Search engines

In this guide, you’ll learn:

- What each metric really means (in simple terms)

- How they work step-by-step

- When to use each one

- Real-world examples you can actually relate to

What Is Accuracy vs Precision vs Recall?

Accuracy, precision, and recall are key machine learning metrics for evaluating how well a classification model makes predictions.

- Accuracy measures overall correctness

- Precision measures how often positive predictions are correct

- Recall measures how many actual positive cases are correctly identified

👉 Together, they help you understand not just if a model is right, but how it is right—and where it fails.

Let’s break them down simply.

Accuracy (Overall Correctness)

Accuracy answers this question:

👉 “Out of all predictions, how many were correct?”

Example:

- 100 predictions made

- 90 are correct

- Accuracy = 90%
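Here is a minimal Python sketch of the accuracy calculation. The `accuracy` function and the label lists are hypothetical. They exist only to reproduce the 90-out-of-100 example (1 = positive, 0 = negative).

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that exactly match the true labels."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("need two non-empty label lists of equal length")
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


# Hypothetical labels that reproduce the example: 100 predictions, 90 correct.
y_true = [1] * 50 + [0] * 50
y_pred = [1] * 45 + [0] * 5 + [0] * 45 + [1] * 5  # 5 mistakes on each class

print(f"Accuracy = {accuracy(y_true, y_pred):.0%}")  # Accuracy = 90%
```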

Precision (Quality of Positive Predictions)

Precision answers:

👉 “When the model predicts YES, how often is it right?”

Example:

- Model predicts 50 emails as spam

- 40 are actually spam

- Precision = 80%
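The precision example is plain arithmetic. In this sketch, the 10 false positives come straight from the example's numbers (50 flagged minus 40 real spam). The variable names are illustrative.

```python
flagged_as_spam = 50           # all positive predictions (TP + FP)
truly_spam_among_flagged = 40  # flagged emails that really are spam (TP)

tp = truly_spam_among_flagged
fp = flagged_as_spam - truly_spam_among_flagged  # 10 real emails wrongly flagged

precision = tp / (tp + fp)
print(f"Precision = {precision:.0%}")  # Precision = 80%
```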

Recall (Coverage of Actual Positives)

Recall answers:

👉 “Out of all real YES cases, how many did the model find?”

Example:

- 100 spam emails exist

- Model catches 80

- Recall = 80%
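Recall works the same way. The 20 missed spam emails are the false negatives implied by the example (100 actual spam minus 80 caught). The variable names are illustrative.

```python
spam_emails_total = 100  # all actual positives (TP + FN)
caught = 80              # spam emails the model flagged (TP)

tp = caught
fn = spam_emails_total - caught  # 20 spam emails the model missed

recall = tp / (tp + fn)
print(f"Recall = {recall:.0%}")  # Recall = 80%
```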

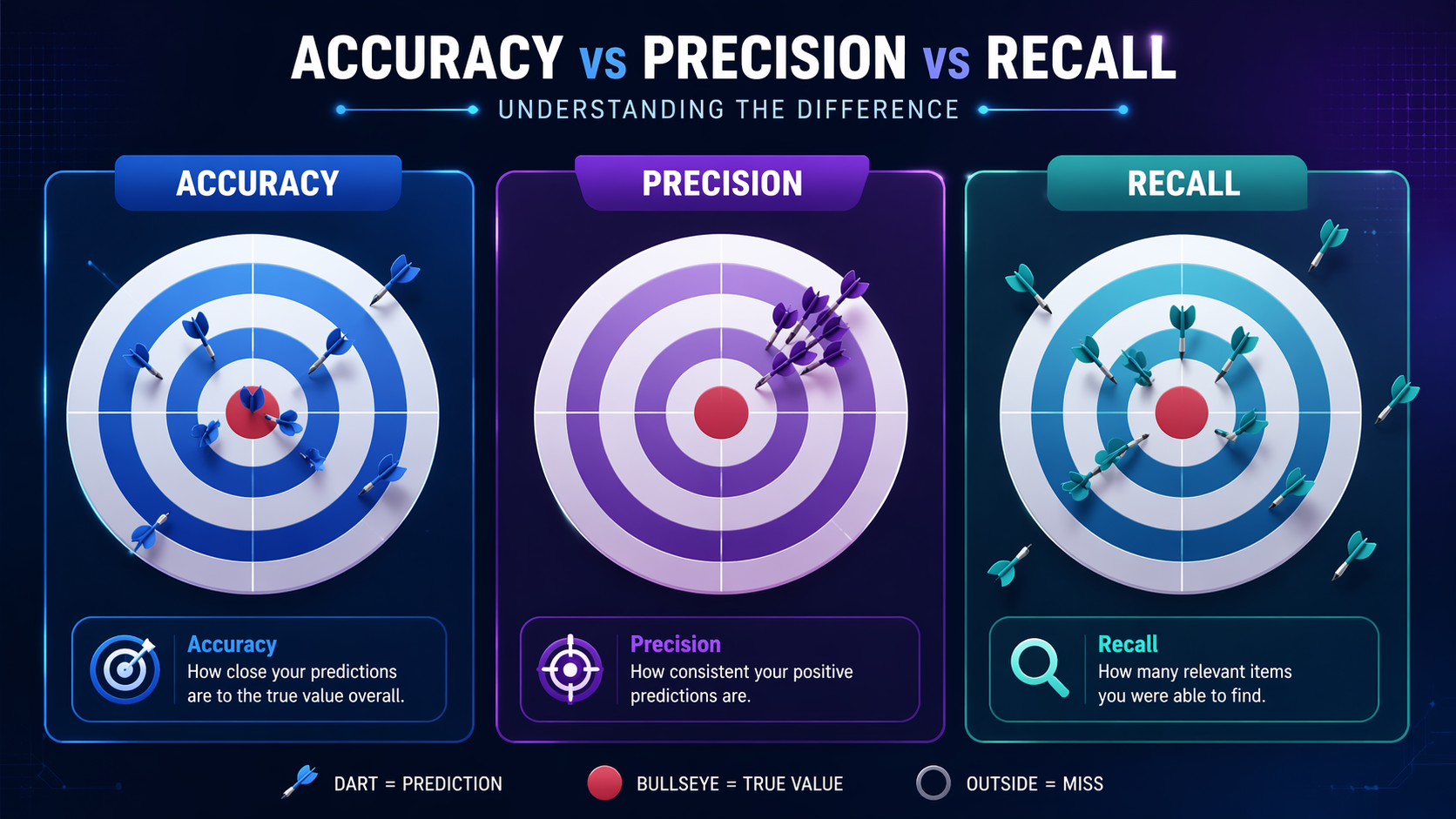

The Intuitive Analogy (This Makes It Stick)

Imagine a dartboard 🎯:

- Accuracy → How close your darts are to the center

- Precision → How tightly grouped your darts are

- Recall → Whether you hit all the targets you were supposed to

👉 You can be:

- Precise but not accurate

- Accurate but not precise

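- Or miss important targets entirely (low recall)

👉 Treat this as a memory aid only. The dartboard picture comes from measurement science, where precision means consistency. In classification, precision specifically means the share of positive predictions that are correct.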

How Accuracy vs Precision vs Recall Work (Step-by-Step)

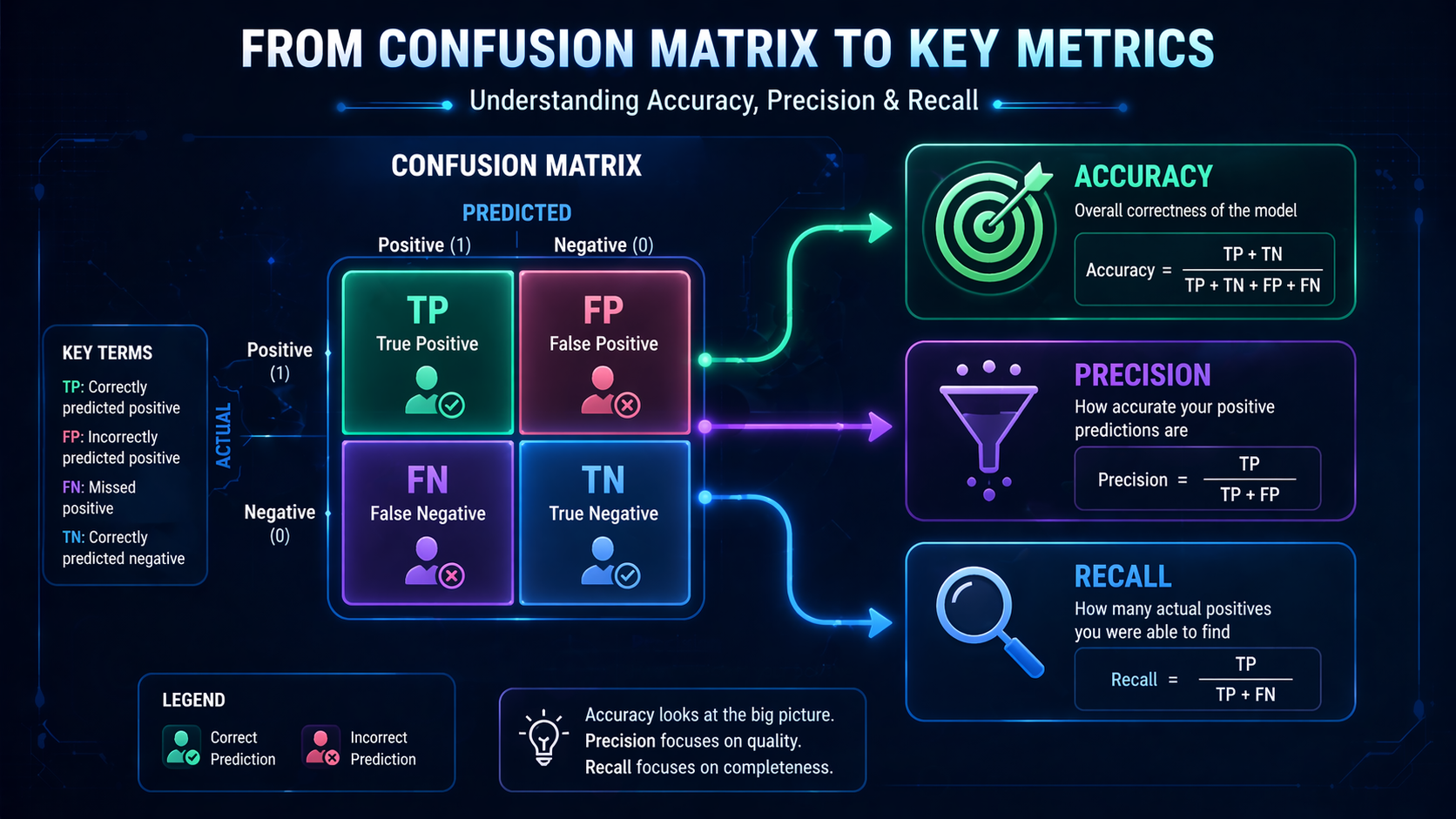

To understand these metrics deeply, we use something called a confusion matrix.

👉 (Learn more: Confusion Matrix Explained)

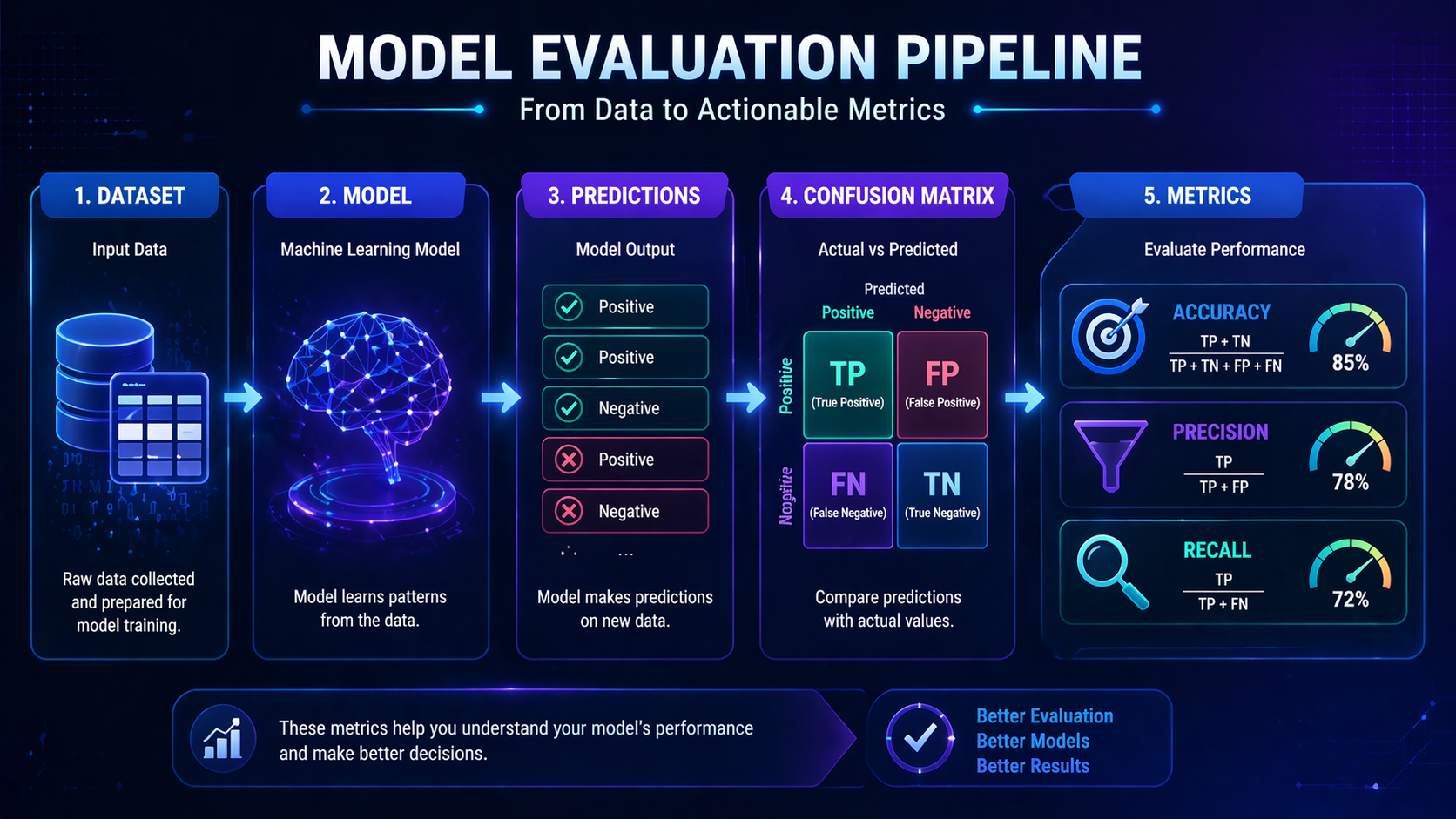

Step 1: Make Predictions

Your model predicts outcomes (e.g., spam vs not spam).

Step 2: Compare Predictions to Reality

Each prediction is checked against actual data.

Step 3: Categorize Outcomes

| | Predicted Positive | Predicted Negative |
|---|---|---|
| Actual Positive | True Positive (TP) | False Negative (FN) |
| Actual Negative | False Positive (FP) | True Negative (TN) |
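Steps 2 and 3 can be sketched in a few lines: compare each prediction with the true label and drop it into one of the four cells. The `confusion_counts` helper and the spam/ham labels are made up for illustration. Libraries such as scikit-learn provide their own confusion-matrix functions.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return TP, FP, FN, TN for a binary problem.

    `positive` is the label treated as the positive class (e.g. "spam").
    """
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    tp = fp = fn = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive and actual == positive:
            tp += 1  # predicted positive, actually positive
        elif predicted == positive:
            fp += 1  # predicted positive, actually negative
        elif actual == positive:
            fn += 1  # predicted negative, actually positive
        else:
            tn += 1  # predicted negative, actually negative
    return {"TP": tp, "FP": fp, "FN": fn, "TN": tn}


# Hypothetical labels: "spam" is the positive class, "ham" is normal email.
y_true = ["spam", "spam", "ham", "ham", "spam", "ham"]
y_pred = ["spam", "ham", "spam", "ham", "spam", "ham"]
print(confusion_counts(y_true, y_pred, positive="spam"))
# {'TP': 2, 'FP': 1, 'FN': 1, 'TN': 2}
```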

Step 4: Calculate Metrics

- Accuracy → (TP + TN) / (TP + TN + FP + FN)

- Precision → TP / (TP + FP)

- Recall → TP / (TP + FN)

👉 You don’t need to memorize formulas—just understand the meaning.
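Putting the three formulas into code makes the denominators explicit. This sketch uses hypothetical counts for 100 emails. Returning 0.0 when a denominator is zero is a convention assumed here; some tools warn or return NaN instead.

```python
def classification_metrics(tp, fp, fn, tn):
    """Accuracy, precision and recall from confusion-matrix counts.

    Precision/recall fall back to 0.0 when their denominator is zero
    (an assumed convention, not a universal rule).
    """
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return accuracy, precision, recall


# Hypothetical counts for 100 emails.
acc, prec, rec = classification_metrics(tp=40, fp=10, fn=20, tn=30)
print(f"accuracy={acc:.2f} precision={prec:.2f} recall={rec:.2f}")
# accuracy=0.70 precision=0.80 recall=0.67
```

The same model gets three different scores, because each metric divides by a different group of predictions.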

Key Concepts Beginners Must Understand

False Positives vs False Negatives

- False Positive (FP): Model says YES, but the actual answer is NO

- False Negative (FN): Model says NO, but the actual answer is YES

👉 These errors matter differently depending on the situation.
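One way to see why the two errors matter differently is to attach a cost to each. The costs below are pure assumptions (1 per false alarm, 20 per missed case), loosely mimicking a medical setting. Both hypothetical models make 10 mistakes, so their accuracy is identical.

```python
def total_error_cost(fp, fn, cost_fp, cost_fn):
    """Weighted cost of mistakes; the per-error costs are assumptions you choose."""
    return fp * cost_fp + fn * cost_fn


# Two hypothetical models, each making 10 mistakes on the same data.
model_a = {"fp": 8, "fn": 2}
model_b = {"fp": 2, "fn": 8}

# Assumed costs: a missed case (FN) is 20x worse than a false alarm (FP).
for name, m in [("A", model_a), ("B", model_b)]:
    print(name, total_error_cost(m["fp"], m["fn"], cost_fp=1, cost_fn=20))
# A 48
# B 162
```

The two models have the same accuracy but very different costs. That gap is what precision and recall help you see.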

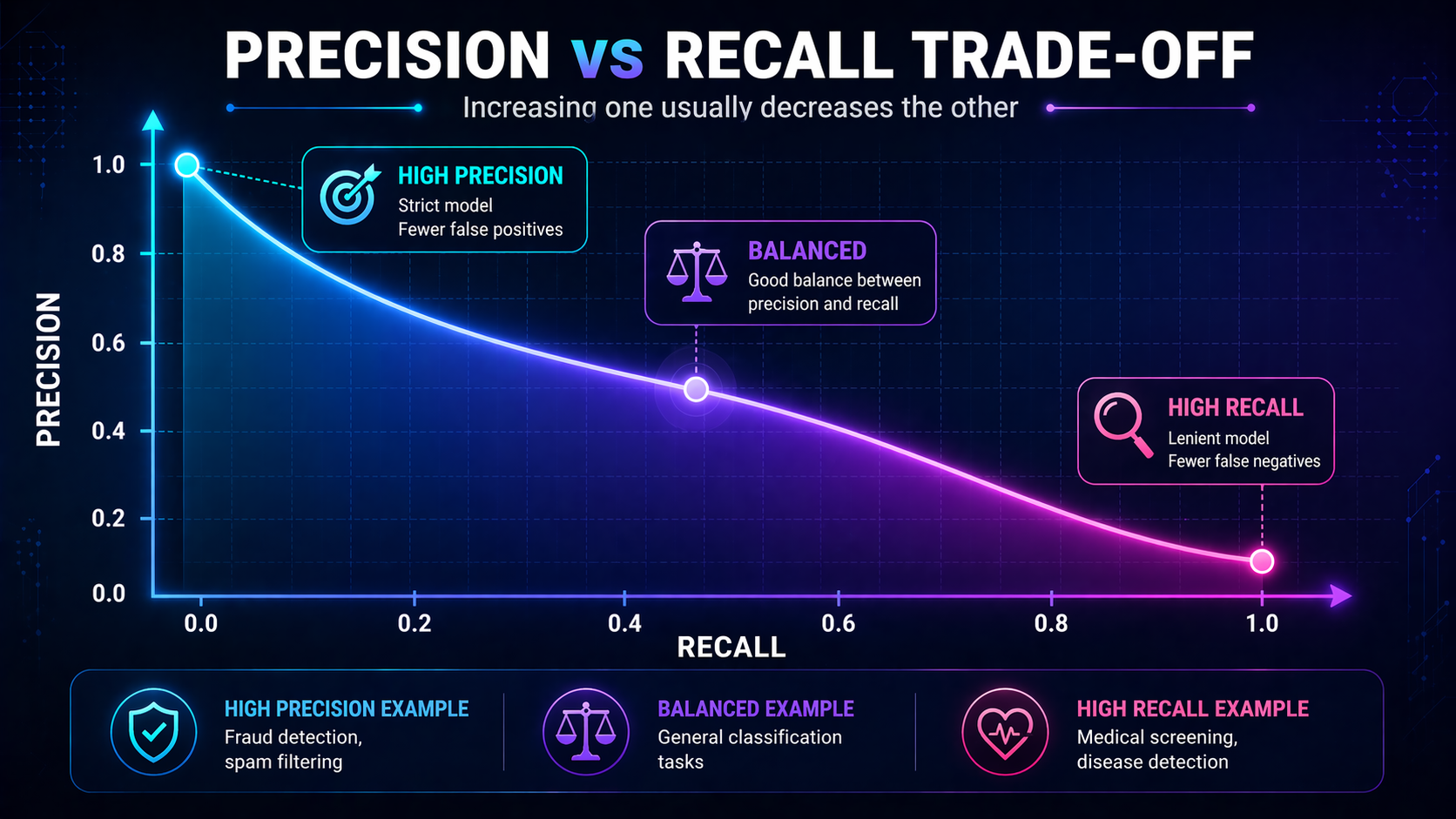

The Precision vs Recall Trade-Off

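👉 Improving one often reduces the other. For a model that outputs a score, raising the decision threshold usually increases precision and lowers recall; lowering it does the reverse.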

- High precision → fewer false positives

- High recall → fewer false negatives
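The trade-off is easiest to see by sweeping the decision threshold over a model's scores. The scores and labels below are invented. The rule "predict positive when score >= threshold" is an assumption of this sketch.

```python
def precision_recall_at(scores, y_true, threshold):
    """Predict positive when score >= threshold, then return (precision, recall)."""
    tp = fp = fn = 0
    for score, actual in zip(scores, y_true):
        predicted = score >= threshold
        if predicted and actual == 1:
            tp += 1
        elif predicted:
            fp += 1
        elif actual == 1:
            fn += 1
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Invented spam scores (model confidence) and true labels (1 = spam).
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20]
y_true = [1, 1, 0, 1, 0, 1, 0, 0]

for threshold in (0.25, 0.50, 0.85):
    p, r = precision_recall_at(scores, y_true, threshold)
    print(f"threshold={threshold:.2f} precision={p:.2f} recall={r:.2f}")
# threshold=0.25 precision=0.57 recall=1.00
# threshold=0.50 precision=0.60 recall=0.75
# threshold=0.85 precision=1.00 recall=0.50
```

With these numbers, raising the threshold moves precision up from 0.57 to 1.00 while recall falls from 1.00 to 0.50.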

Imbalanced Datasets (Critical Insight)

👉 This is where beginners get misled.

If 95% of emails are not spam:

- A model that always predicts “not spam” = 95% accuracy

But:

- Precision = undefined (0/0, because the model never predicts spam), usually reported as 0

- Recall = 0

👉 Looks good. Performs terribly.
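You can reproduce the "always predict not spam" trap in a few lines. The 1,000-email dataset with 5% spam is hypothetical.

```python
# Hypothetical dataset: 1,000 emails, 50 spam (label 1) and 950 not spam (label 0).
y_true = [1] * 50 + [0] * 950
y_pred = [0] * len(y_true)  # a "model" that always predicts "not spam"

tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)

accuracy = (tp + tn) / len(y_true)
# No positive predictions at all, so precision is 0/0; report it as 0.0 by convention.
precision = tp / (tp + fp) if (tp + fp) else 0.0
recall = tp / (tp + fn) if (tp + fn) else 0.0

print(f"accuracy={accuracy:.2f} precision={precision:.2f} recall={recall:.2f}")
# accuracy=0.95 precision=0.00 recall=0.00
```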

Context Is Everything

There is no “best” metric.

👉 The right metric depends on your goal.

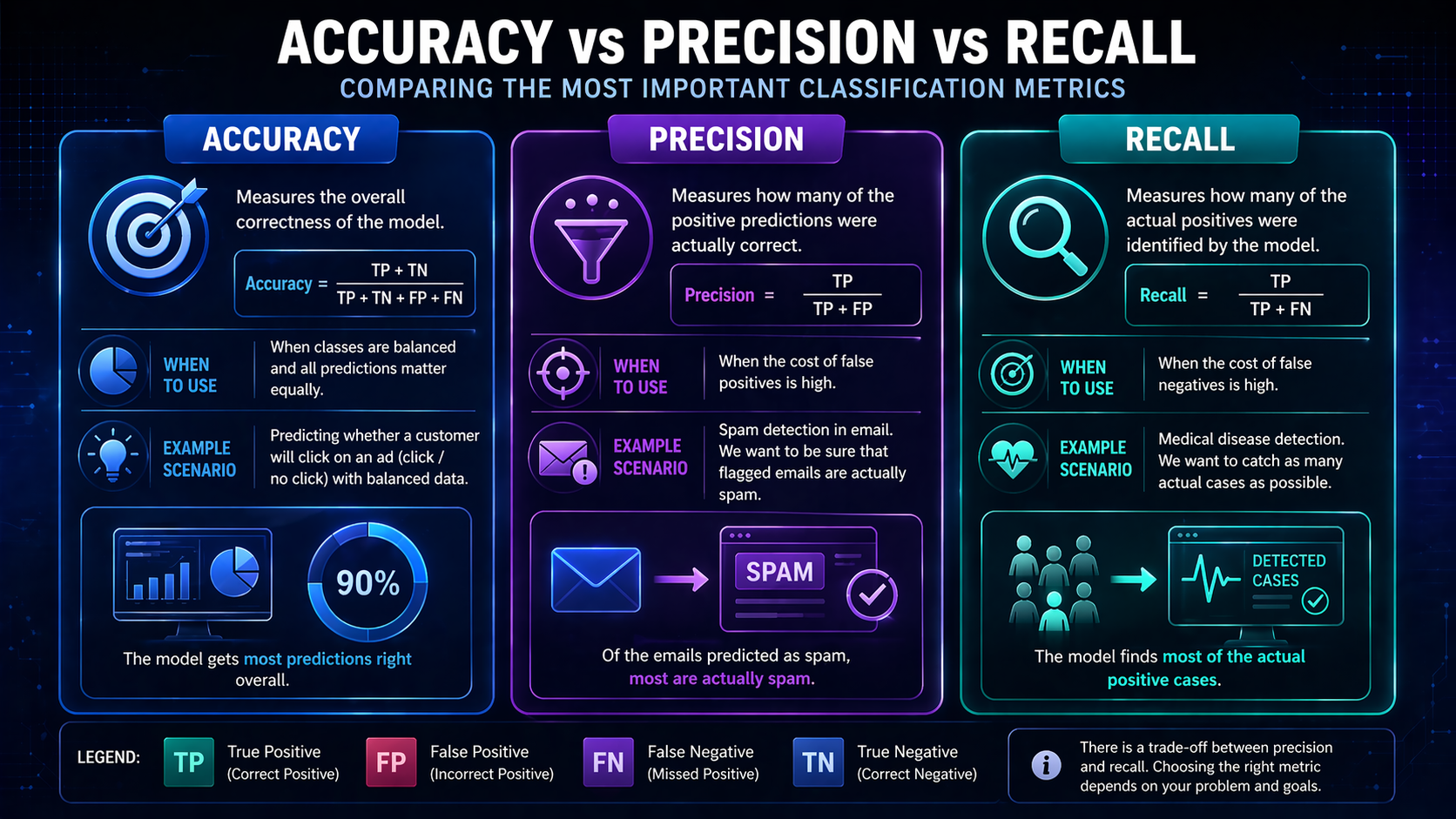

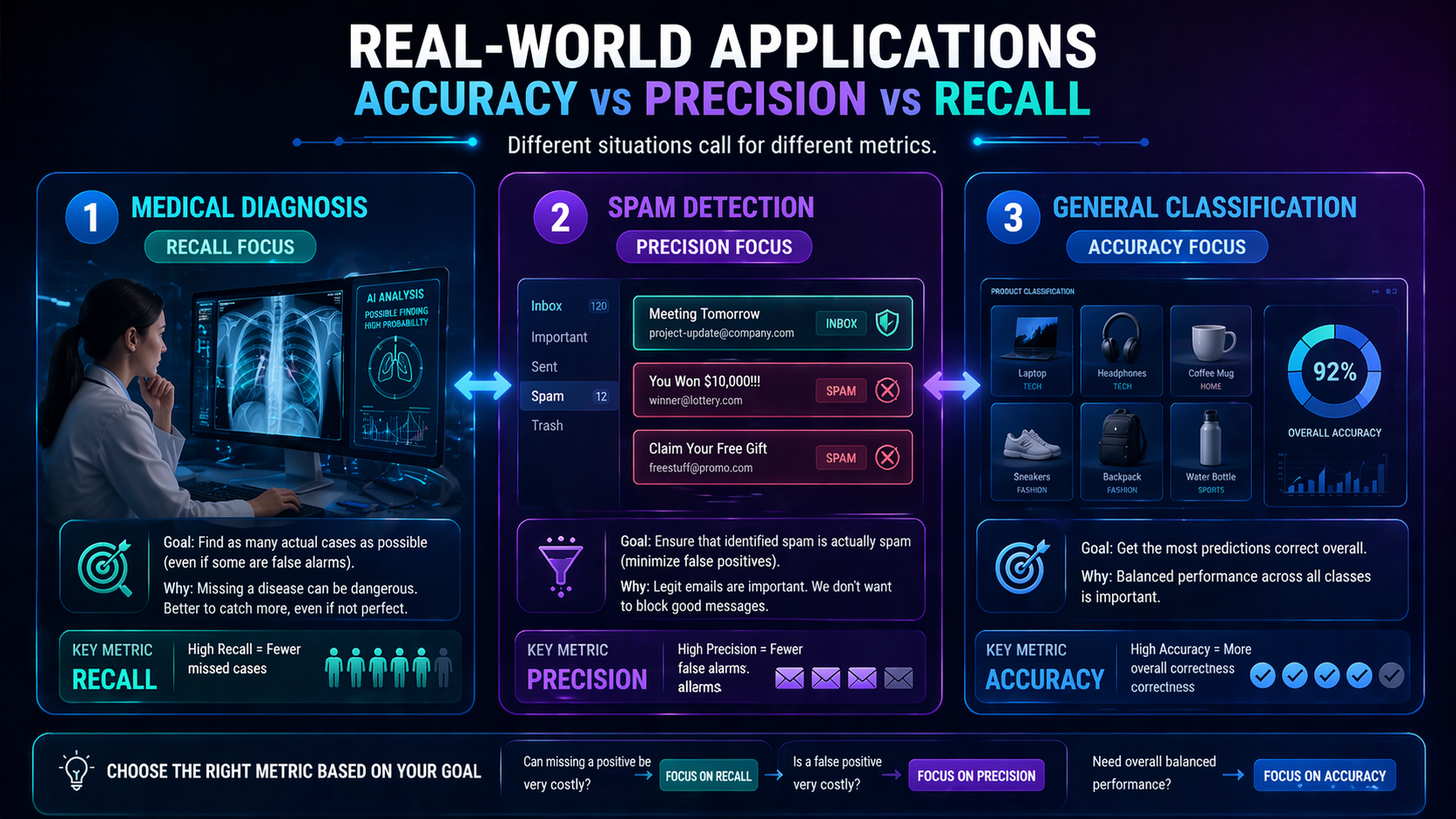

When Should You Use Each Metric? (Decision Framework)

This is where everything clicks 👇

| Scenario | Best Metric | Why |
|---|---|---|
| Spam detection | Precision | Avoid marking real emails as spam |
| Disease detection | Recall | Don’t miss real cases |
| Fraud detection | Balance (e.g., F1 Score) | Both errors are costly |
| Balanced datasets | Accuracy | Reliable when classes are roughly equal in size |

👉 This section alone makes the concept practical.

The Missing Piece: What About F1 Score?

What if you need both precision and recall?

👉 That’s where F1 Score comes in.

- Combines precision and recall into one metric (their harmonic mean)

- Useful when both types of errors matter

👉 Learn more: F1 Score Explained
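F1 is the harmonic mean of precision and recall: F1 = 2 × P × R / (P + R). Here is a minimal sketch; the function name `f1` is just illustrative.

```python
def f1(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


print(round(f1(0.8, 0.8), 2))  # 0.8  (equal inputs give the same value back)
print(round(f1(1.0, 0.1), 2))  # 0.18 (pulled toward the weaker metric)
```

A simple average of 1.0 and 0.1 would be 0.55. The harmonic mean punishes the lopsided model much harder, which is why F1 suits cases where both types of error matter.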

Real-World Applications

Spam Detection

- Focus: Precision

- Why: Avoid losing important emails

Medical Diagnosis

- Focus: Recall

- Why: Missing a disease can be life-threatening

Fraud Detection

- Focus: Balance

- Why: False alarms and missed fraud both carry a real cost, so neither can be ignored

Search Engines

- Precision → share of returned results that are relevant

- Recall → share of all relevant pages that are returned
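In search, precision and recall are often computed over sets of documents. The document IDs below are invented for illustration.

```python
# Hypothetical search: documents the engine returned vs. all relevant documents.
retrieved = {"d1", "d2", "d3", "d4", "d5"}
relevant = {"d1", "d3", "d6", "d7"}

hits = retrieved & relevant             # relevant documents that were returned
precision = len(hits) / len(retrieved)  # share of returned results that are relevant
recall = len(hits) / len(relevant)      # share of relevant documents that were found

print(f"precision={precision:.2f} recall={recall:.2f}")
# precision=0.40 recall=0.50
```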

Advantages and Limitations

Advantages

- Easy to understand and interpret

- Provide deeper insight than accuracy alone

- Essential for classification tasks

- Help tailor models to real-world needs

Limitations

- Accuracy can be misleading with imbalanced data

- Precision and recall don’t show the full picture alone

- Trade-offs can be difficult to balance

- Often need to be complemented by F1 Score or ROC-AUC for deeper evaluation

Comparison With Related Concepts

Accuracy vs F1 Score

- Accuracy → overall correctness

- F1 Score → balance between precision and recall

Precision vs Recall

- Precision → correctness of positive predictions

- Recall → completeness of detection

Connection to Machine Learning Topics

These metrics are core to:

- Model Evaluation Metrics Explained

- Supervised Learning Explained

- Neural Networks Explained

- Deep Learning Explained

They also connect to foundational topics like:

- Artificial Intelligence Explained

- Machine Learning Explained

- Unsupervised Learning Explained

- Reinforcement Learning Explained

Real-World Workflow Example

Let’s walk through a practical case:

Fraud Detection System

- Train model on labeled data

- Make predictions

- Build confusion matrix

- Calculate precision and recall

- Adjust based on business needs

👉 If missing fraud is risky → prioritize recall (typically by lowering the decision threshold)

👉 If false alerts are costly → prioritize precision (typically by raising the decision threshold)
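"Adjust based on business needs" often means picking a decision threshold. The helper below is hypothetical. It returns the highest threshold whose recall reaches a target, along with the precision that threshold gives you. The fraud scores and labels are invented.

```python
def pick_threshold_for_recall(scores, y_true, target_recall):
    """Highest threshold whose recall reaches target_recall.

    Hypothetical helper: candidate thresholds are the distinct scores, tried
    from highest to lowest; an example is predicted positive when score >= threshold.
    Returns (threshold, recall, precision), or None if the target is unreachable.
    """
    positives = sum(y_true)
    if positives == 0:
        raise ValueError("need at least one positive example")
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for s, t in zip(scores, y_true) if s >= threshold and t == 1)
        fp = sum(1 for s, t in zip(scores, y_true) if s >= threshold and t == 0)
        recall = tp / positives
        if recall >= target_recall:
            # tp + fp >= 1 here, because the example with this exact score is flagged
            return threshold, recall, tp / (tp + fp)
    return None


# Invented fraud scores (higher = more suspicious) and labels (1 = fraud).
scores = [0.97, 0.91, 0.85, 0.66, 0.52, 0.44, 0.31, 0.12]
y_true = [1, 1, 0, 1, 0, 1, 0, 0]

for target in (0.75, 1.0):
    threshold, rec, prec = pick_threshold_for_recall(scores, y_true, target)
    print(f"target recall {target:.2f}: threshold={threshold:.2f} "
          f"recall={rec:.2f} precision={prec:.2f}")
# target recall 0.75: threshold=0.66 recall=0.75 precision=0.75
# target recall 1.00: threshold=0.44 recall=1.00 precision=0.67
```

Demanding full recall here forces a lower threshold, and precision drops as more legitimate transactions get flagged.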

Future Outlook

As AI systems evolve:

- Models will optimize multiple metrics automatically

- Context-aware evaluation will become standard

- Ethical AI will include fairness metrics

- Real-time model evaluation will improve decision-making

Organizations like:

…are actively developing better evaluation frameworks.

FAQ: Accuracy vs Precision vs Recall

What is the main difference between accuracy vs precision vs recall?

Accuracy measures overall correctness, precision measures how many predicted positives are correct, and recall measures how many actual positives are detected.

Why is accuracy not always enough?

Because it can be misleading in imbalanced datasets where one class dominates.

What is the difference between precision and recall?

Precision focuses on correctness of positive predictions, while recall focuses on capturing all actual positive cases.

When should I prioritize precision?

Use precision when false positives are costly, such as in spam detection.

When should I prioritize recall?

Use recall when missing important cases is dangerous, such as in medical diagnosis.

Can a model have high accuracy but poor precision and recall?

Yes, especially in imbalanced datasets where most predictions belong to one class.

What is a false positive in machine learning?

A false positive is when the model incorrectly predicts a positive result.

What is a false negative in machine learning?

A false negative is when the model fails to detect a real positive case.

What is F1 score and why is it used?

F1 score combines precision and recall into a single metric to balance both types of errors.

Which is better: accuracy vs precision vs recall?

No single metric is always best—it depends on the problem and the cost of errors.

Conclusion

Understanding accuracy vs precision vs recall is essential for evaluating machine learning models effectively.

Each metric tells a different story:

- Accuracy → overall performance

- Precision → how reliable positive predictions are

- Recall → how complete detection is

👉 The real skill is knowing which one matters most for your specific problem.

Recommended Next Topics

To build a complete understanding, explore:

- Model Evaluation Metrics Explained

- Confusion Matrix Explained

- F1 Score Explained

- ROC Curve and AUC Explained

- Bias vs Variance Tradeoff