Introduction

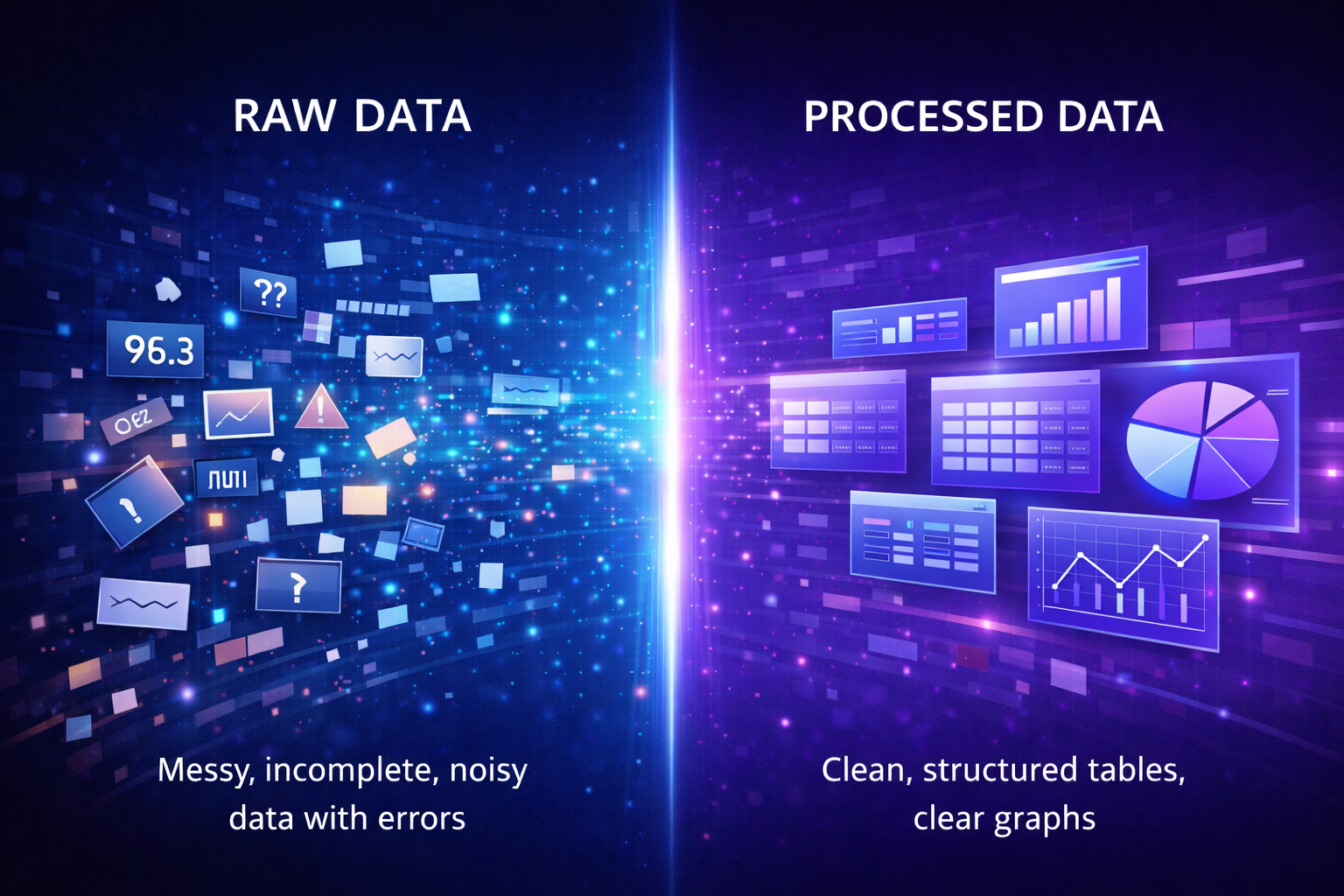

Before any machine learning model can learn, it needs clean, structured, and meaningful data. However, real-world data is rarely perfect—it often contains missing values, errors, inconsistencies, and noise.

This is where data preprocessing becomes essential.

Think of it like preparing ingredients before cooking. If your ingredients are messy or spoiled, the final dish won’t turn out well. In the same way, if your data is unclean or poorly formatted, even the most advanced AI models will struggle to perform.

Data preprocessing is an essential step in machine learning and artificial intelligence because clean, organized data improves model accuracy, training quality, and prediction performance.

In this guide, you’ll learn:

- What data preprocessing is

- Why it matters

- How it works step-by-step

- Key techniques and concepts

- Real-world applications

- Advantages and limitations

Data Preprocessing Explained

Data preprocessing is the process of cleaning, organizing, and transforming raw data into a structured format that machine learning models can understand. It improves data quality, removes errors, and ensures models can learn accurate patterns from the data.

Raw data can come from many sources:

- Databases

- Sensors and IoT devices

- Websites and APIs

- User inputs

- Logs and transaction records

However, this data is often:

- Incomplete (missing values)

- Noisy (errors or outliers)

- Inconsistent (different formats)

Data preprocessing fixes these issues so models can learn meaningful patterns.

👉 This step is essential across AI systems, including those covered in Deep Learning Explained and Neural Networks Explained, where large volumes of data must be properly prepared.

Why Data Preprocessing Is Important

Machine learning models rely heavily on the quality of the data they are trained on.

If the data is poor:

- The model learns incorrect patterns

- Predictions become unreliable

- Performance decreases significantly

Key Benefits:

- Improves model accuracy

- Reduces noise and errors

- Speeds up training time

- Helps models generalize better to new data

Real Example

Imagine training a model to predict house prices:

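- If some prices are missing → the model has fewer reliable examples to learn from, and predictions become less accurate

- If some values are extremely high due to data-entry errors → the model’s estimates are pulled toward those values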

Preprocessing ensures the model learns from clean, realistic data.

How Data Preprocessing Works (Step-by-Step)

1. Data Collection

Data is gathered from multiple sources.

Examples:

- Customer purchase data from an e-commerce site

- Images for a computer vision model

- Text data for chatbots

2. Data Cleaning

This step fixes errors and inconsistencies.

Common tasks:

- Removing duplicate entries

- Fixing incorrect values

- Handling missing data

Example:

- Replacing missing ages with the average age

- Removing corrupted records
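
The cleaning tasks above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production routine: the records, the field names `id` and `age`, and the rule that a negative age marks a corrupted row are all assumptions made for the example. In a real project you would more likely reach for pandas methods such as `drop_duplicates` and `fillna`.

```python
from statistics import mean

# Hypothetical raw records: one exact duplicate, one missing age, one corrupted row.
records = [
    {"id": 1, "age": 34},
    {"id": 2, "age": None},   # missing value
    {"id": 1, "age": 34},     # duplicate of the first record
    {"id": 3, "age": -5},     # corrupted: an age cannot be negative (assumed rule)
    {"id": 4, "age": 46},
]

def clean(rows):
    # 1. Remove duplicates while keeping the first occurrence.
    seen, unique = set(), []
    for row in rows:
        key = (row["id"], row["age"])
        if key not in seen:
            seen.add(key)
            unique.append(row)

    # 2. Drop corrupted records (here: a negative age).
    valid = [r for r in unique if r["age"] is None or r["age"] >= 0]

    # 3. Fill missing ages with the mean of the known ages.
    known = [r["age"] for r in valid if r["age"] is not None]
    fill = mean(known)
    return [{**r, "age": fill if r["age"] is None else r["age"]} for r in valid]

cleaned = clean(records)
print(cleaned)
```

Note that the corrupted row is removed before the mean is computed, so the bad value does not leak into the fill value.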

3. Data Transformation

Data is converted into a format that models can understand.

Examples:

- Converting text into numerical values

- Scaling features to similar ranges

- Encoding categories (e.g., “Yes/No” → 1/0)

Real Example

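If one feature is income ($100,000) and another is age (30), a model that relies on distances or gradient updates may give income more weight simply because its numbers are larger. Scaling puts features on comparable ranges so that no feature dominates because of its units. Tree-based models are largely unaffected by feature scale, so the benefit depends on the algorithm.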
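
To see the income-versus-age effect in numbers, here is a small sketch that compares distances between three made-up people before and after min-max scaling. The people, and the income and age ranges passed to `scale`, are assumptions for the example; in practice the ranges would be computed from the training data.

```python
import math

# Hypothetical people described by (income in dollars, age in years).
a = (100_000, 30)
b = (101_000, 65)   # similar income, very different age
c = (110_000, 31)   # different income, similar age

def min_max(value, lo, hi):
    """Rescale value from the range [lo, hi] to [0, 1]."""
    return (value - lo) / (hi - lo)

def scale(person, income_range=(20_000, 200_000), age_range=(18, 90)):
    # The ranges are assumed for this example only.
    income, age = person
    return (min_max(income, *income_range), min_max(age, *age_range))

raw_ab, raw_ac = math.dist(a, b), math.dist(a, c)
scaled_ab = math.dist(scale(a), scale(b))
scaled_ac = math.dist(scale(a), scale(c))

print(raw_ab < raw_ac)        # True: on raw values, income dominates the distance
print(scaled_ab > scaled_ac)  # True: after scaling, the 35-year age gap counts
```

On raw values, person b looks like the closest match to a even though they are 35 years apart. After scaling, person c becomes the closer match, which is the behavior a distance-based model such as k-nearest neighbors would then pick up.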

4. Data Reduction

Large datasets are simplified while keeping important information.

Techniques include:

- Removing irrelevant features

- Dimensionality reduction

This improves efficiency without sacrificing performance.
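
One simple form of removing irrelevant features is dropping columns that never change, since a constant column cannot help a model tell examples apart. The sketch below assumes a tiny made-up dataset stored as a dictionary of columns. It does not cover dimensionality reduction techniques such as PCA, which combine features rather than drop them.

```python
from statistics import pvariance

# Hypothetical dataset: each key is a feature, each list holds its column values.
columns = {
    "age":     [25, 32, 47, 51],
    "country": [1, 1, 1, 1],        # identical for every row: carries no signal
    "income":  [40_000, 52_000, 61_000, 58_000],
}

def drop_low_variance(cols, threshold=0.0):
    """Keep only features whose population variance exceeds the threshold."""
    return {name: vals for name, vals in cols.items() if pvariance(vals) > threshold}

reduced = drop_low_variance(columns)
print(list(reduced))   # ['age', 'income']
```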

5. Data Splitting

Data is divided into:

- Training data

- Testing data

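This ensures the model is evaluated on data it has not seen during training. To keep that evaluation honest, fit scalers, encoders, and fill values on the training data only, then apply them unchanged to the testing data.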
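
A split can be as simple as shuffling the rows and cutting the list in two. The helper below is a hand-written sketch with an assumed 80/20 ratio and a fixed seed; `split_train_test` is a hypothetical name, and scikit-learn’s `train_test_split` is the usual ready-made choice.

```python
import random

def split_train_test(rows, test_fraction=0.2, seed=42):
    """Shuffle a copy of rows and split it into (train, test)."""
    shuffled = rows[:]                      # leave the caller's list untouched
    random.Random(seed).shuffle(shuffled)   # seeded so the split is reproducible
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]

data = list(range(10))          # stand-in for 10 labelled examples
train, test = split_train_test(data)
print(len(train), len(test))    # 8 2
```

Shuffling before the cut matters when the rows are ordered, for example by date or by class, because an unshuffled split would then test on a different kind of data than it trained on.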

👉 Learn more in Training vs Testing Data

6. Feature Engineering

New features are created to improve model performance.

Examples:

- Converting “date” into “day of week”

- Grouping ages into categories

- Extracting keywords from text

Feature engineering helps models learn better patterns from the data you already have, rather than relying on collecting more of it.
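
Here is a rough sketch of two of the examples above: turning a date into a day of the week and pulling keywords out of text. The record, its field names, and the tiny stopword list are all made up for illustration; real keyword extraction usually needs a proper tokenizer and a fuller stopword list.

```python
from datetime import date

# Hypothetical order record; the field names are illustrative only.
order = {"date": "2024-03-15", "review": "Fast delivery but damaged box"}

DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]
STOPWORDS = {"but", "and", "the", "a"}   # tiny assumed stopword list

def engineer(record):
    d = date.fromisoformat(record["date"])
    words = record["review"].lower().split()
    return {
        "day_of_week": DAYS[d.weekday()],    # weekday() returns 0 for Monday
        "is_weekend": d.weekday() >= 5,      # Saturday = 5, Sunday = 6
        "keywords": [w for w in words if w not in STOPWORDS],
    }

features = engineer(order)
print(features)
```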

👉 Learn more in Feature Engineering Explained

Key Concepts Beginners Must Understand

Missing Data

Sometimes datasets are incomplete.

Solutions:

- Remove rows with missing values

- Fill missing values (imputation) using the mean, the median, or a predictive model

Outliers

Outliers are unusual values that can distort results.

Example:

- A salary of $1,000,000 in a dataset of average workers

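Handling outliers, whether by removing, capping, or transforming them, improves model stability. Genuine extreme values can carry useful information, so check whether an outlier is an error before discarding it.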
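
One common convention for flagging outliers is the interquartile range (IQR) rule: anything more than 1.5 times the IQR below the first quartile or above the third quartile is treated as suspicious. The article does not prescribe a specific method, so take this as one possible approach, with made-up salary figures.

```python
from statistics import quantiles

salaries = [42_000, 48_000, 51_000, 55_000, 58_000, 61_000, 1_000_000]

def iqr_bounds(values, k=1.5):
    """Return (low, high) fences using the interquartile range rule."""
    q1, _, q3 = quantiles(values, n=4)   # the three quartile cut points
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr

low, high = iqr_bounds(salaries)
outliers = [s for s in salaries if s < low or s > high]
kept = [s for s in salaries if low <= s <= high]
print(outliers)   # [1000000]
```

The rule only flags values. Whether to drop, cap, or keep them is a judgment call that depends on whether the value is an error or a real observation.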

Normalization vs Standardization

| Method | Description | Example |
| --- | --- | --- |
| Normalization | Rescales values to a fixed range, usually 0 to 1 | Pixel values in images |
| Standardization | Rescales values to a mean of 0 and a standard deviation of 1 | Financial data |
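
The two methods come down to two short formulas: normalization computes (x - min) / (max - min), and standardization computes (x - mean) / standard deviation. The sketch below applies both to small made-up lists using only the standard library; scikit-learn’s `MinMaxScaler` and `StandardScaler` do the same job on real datasets.

```python
from statistics import mean, pstdev

def normalize(values):
    """Min-max normalization: map values linearly onto [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]   # assumes hi != lo

def standardize(values):
    """Z-score standardization: subtract the mean, divide by the std deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]       # assumes sigma != 0

pixels = [0, 64, 128, 255]
scaled_pixels = normalize(pixels)
print(scaled_pixels)

returns = [2.0, 4.0, 6.0, 8.0]
z = standardize(returns)
print(z)
```

Normalized values always stay between 0 and 1, while standardized values are not bounded and can be negative.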

Encoding Categorical Data

Most machine learning models cannot work with text labels directly.

So categories are converted into numbers:

- One-hot encoding

- Label encoding
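
Both encodings fit in a few lines of plain Python. The color list is made up for the example, and sorting the categories is just one way to fix a stable order.

```python
colors = ["red", "green", "blue", "green"]

# Fix the category order once so the mapping is reproducible.
categories = sorted(set(colors))              # ['blue', 'green', 'red']

# Label encoding: each category becomes one integer.
label_map = {cat: i for i, cat in enumerate(categories)}
labels = [label_map[c] for c in colors]

# One-hot encoding: each category becomes its own 0/1 column.
one_hot = [[int(c == cat) for cat in categories] for c in colors]

print(labels)    # [2, 1, 0, 1]
print(one_hot)   # [[0, 0, 1], [0, 1, 0], [1, 0, 0], [0, 1, 0]]
```

Label encoding is compact but implies an order (blue < green < red) that may not exist. One-hot encoding avoids that at the cost of one extra column per category.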

Feature Selection

Selecting the most important variables for the model.

Benefits:

- Reduces complexity

- Improves performance

- Prevents overfitting
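
As a rough illustration, features can be ranked by how strongly they correlate with the target, keeping only the top few. Correlation ranking is just one simple selection method, chosen here for brevity, and the house data below is invented. It only captures linear relationships, so treat it as a first filter rather than a final answer.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

# Hypothetical house data: two candidate features and the target price.
candidates = {
    "size_sqm":    [50, 70, 90, 120, 150],
    "door_number": [12, 3, 45, 7, 30],
}
price = [150, 200, 260, 330, 420]

def select_top(features, target, k=1):
    """Rank features by absolute correlation with the target; keep the top k."""
    ranked = sorted(features, key=lambda f: abs(pearson(features[f], target)), reverse=True)
    return ranked[:k]

selected = select_top(candidates, price)
print(selected)   # ['size_sqm']
```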

👉 Related concept: Overfitting vs Underfitting

Types of Data Preprocessing

Data Cleaning

Fixing errors and inconsistencies.

Data Integration

Combining data from multiple sources into one dataset.
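
A typical integration task is joining two sources on a shared key. The sketch below assumes two made-up sources, a customer list and an order list, linked by a hypothetical `customer_id` field. It keeps only rows that appear in both, which is the behavior of an inner join.

```python
# Two hypothetical sources that describe the same customers.
crm = [
    {"customer_id": 1, "name": "Ana"},
    {"customer_id": 2, "name": "Ben"},
]
orders = [
    {"customer_id": 1, "total": 120.0},
    {"customer_id": 1, "total": 35.5},
    {"customer_id": 3, "total": 80.0},   # no matching CRM record
]

def inner_join(left, right, key):
    """Combine rows from both sources that share the same key value."""
    index = {}
    for row in left:
        index.setdefault(row[key], []).append(row)
    return [{**l, **r} for r in right for l in index.get(r[key], [])]

merged = inner_join(crm, orders, "customer_id")
print(merged)
```

The order for customer 3 and the customer Ben both drop out of the result. Whether unmatched rows should be kept or discarded is a decision to make deliberately, since silently losing them can skew the dataset.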

Data Transformation

Changing formats, scaling, and encoding data.

Data Reduction

Reducing dataset size while preserving important information.

Data Discretization

Converting continuous data into categories.

Example:

- Age → “Young”, “Adult”, “Senior”
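
The age example can be sketched with a sorted list of cut-offs and a binary search. The cut-offs of 18 and 65 are assumptions for illustration; the right boundaries depend on the problem.

```python
from bisect import bisect_right

# Assumed cut-offs for illustration: under 18, 18 to 64, 65 and over.
EDGES = [18, 65]
LABELS = ["Young", "Adult", "Senior"]

def age_group(age):
    """Map a continuous age to one of the labelled bins."""
    return LABELS[bisect_right(EDGES, age)]

ages = [12, 18, 40, 64, 65, 83]
groups = [age_group(a) for a in ages]
print(groups)   # ['Young', 'Adult', 'Adult', 'Adult', 'Senior', 'Senior']
```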

Real-World Applications of Data Preprocessing

Healthcare

- Cleaning patient records

- Handling missing medical data

- Preparing datasets for disease prediction

Finance

- Fraud detection systems rely on clean transaction data

- Removing anomalies and inconsistencies

E-commerce

- Preparing customer data for recommendation systems

- Encoding user behavior patterns

Self-Driving Cars

- Processing sensor data

- Cleaning image and video inputs

👉 These systems rely heavily on Deep Learning and Neural Networks for decision-making.

Advantages of Data Preprocessing

- Improves model accuracy

- Reduces noise and inconsistencies

- Speeds up training time

- Enhances feature quality

- Helps models generalize better

Limitations of Data Preprocessing

- Time-consuming process

- Requires domain knowledge

- Risk of removing useful data

- Can introduce bias if done incorrectly

Data Preprocessing vs Related Concepts

Data Preprocessing vs Feature Engineering

| Aspect | Data Preprocessing | Feature Engineering |
| --- | --- | --- |
| Goal | Clean and prepare data | Improve features |
| Focus | Data quality | Model performance |
| Example | Filling missing values | Creating new variables |

Summary: Preprocessing ensures clean data, while feature engineering makes that data more useful for learning.

Data Preprocessing vs Data Cleaning

- Data cleaning is just one step

- Data preprocessing includes cleaning, transformation, and more

Data Preprocessing vs Data Augmentation

- Preprocessing improves existing data

- Augmentation creates new data (common in deep learning systems)

Data preprocessing often works together with feature engineering, training data preparation, and neural networks to improve machine learning performance.

Future of Data Preprocessing

As AI evolves, preprocessing is becoming more automated.

Future trends include:

- Automated data cleaning tools

- AI-driven feature engineering

- Real-time preprocessing pipelines

- Integration with large-scale AI systems

According to IBM’s guide on data preprocessing, data quality remains one of the most critical factors in AI success.

Stanford’s machine learning research also highlights that better data often matters more than more complex models.

Data Preprocessing Explained (FAQ)

1. Why is data preprocessing important?

Data preprocessing is important because machine learning models rely on clean, structured data to learn accurate patterns. Without preprocessing, models may learn incorrect relationships, leading to poor predictions and unreliable results.

2. What happens if you skip data preprocessing?

If you skip data preprocessing, the model may train on messy or inconsistent data, causing it to learn incorrect patterns. This often leads to low accuracy, poor generalization, and unreliable predictions in real-world applications.

3. Is data preprocessing always required in machine learning?

Almost always. Real-world data is rarely clean, so some preprocessing is usually needed to make the data usable, consistent, and suitable for training models effectively.

4. What is the most important step in data preprocessing?

Data cleaning is often the most important step in data preprocessing because it removes errors, missing values, and inconsistencies. Clean data provides a strong foundation for all other steps in the machine learning pipeline.

5. What tools are used for data preprocessing?

Common tools used for data preprocessing include:

- Python (Pandas and NumPy for data handling)

- Scikit-learn (for preprocessing techniques like scaling and encoding)

- TensorFlow (for deep learning data pipelines)

These tools are widely used in both Machine Learning and Deep Learning workflows.

6. What is normalization in data preprocessing?

Normalization is a data preprocessing technique that scales numerical values to a standard range, usually between 0 and 1. This helps machine learning models treat all features equally, especially when values vary widely.

7. What is feature engineering?

Feature engineering is the process of creating new features from existing data to improve model performance. It is closely related to preprocessing and plays a key role in building better machine learning models (see Feature Engineering Explained).

8. Can data preprocessing introduce bias?

Yes, data preprocessing can introduce bias if data is altered incorrectly or important information is removed. For example, removing too many outliers or filling missing values poorly can distort the dataset and affect model fairness.

9. How long does data preprocessing take?

Data preprocessing can take a significant portion of a machine learning project. A commonly cited rule of thumb puts it at around 60–80% of the total time, though the share varies widely with the data and the project. Cleaning and preparing data is often more laborious than building the model itself.

10. Is data preprocessing different in deep learning?

Yes, data preprocessing in deep learning often involves larger datasets and additional steps like data augmentation. Deep learning models, such as the ones covered in Neural Networks Explained, require well-prepared data to perform effectively.

Conclusion

Data preprocessing is one of the most important steps in any AI or machine learning workflow. It transforms raw data into a clean, structured format that models can learn from effectively.

Without it, even the most advanced algorithms are unlikely to produce reliable results.

As you continue your journey, mastering preprocessing will give you a strong foundation for building powerful AI systems.