What Is the Bias vs Variance Tradeoff?

The bias vs variance tradeoff is a core concept in machine learning that describes the balance between a model that is too simple (high bias) and one that is too complex (high variance). The goal is to find a model that generalizes well to new data by keeping the combined error from bias and variance as low as possible, since reducing one usually increases the other.

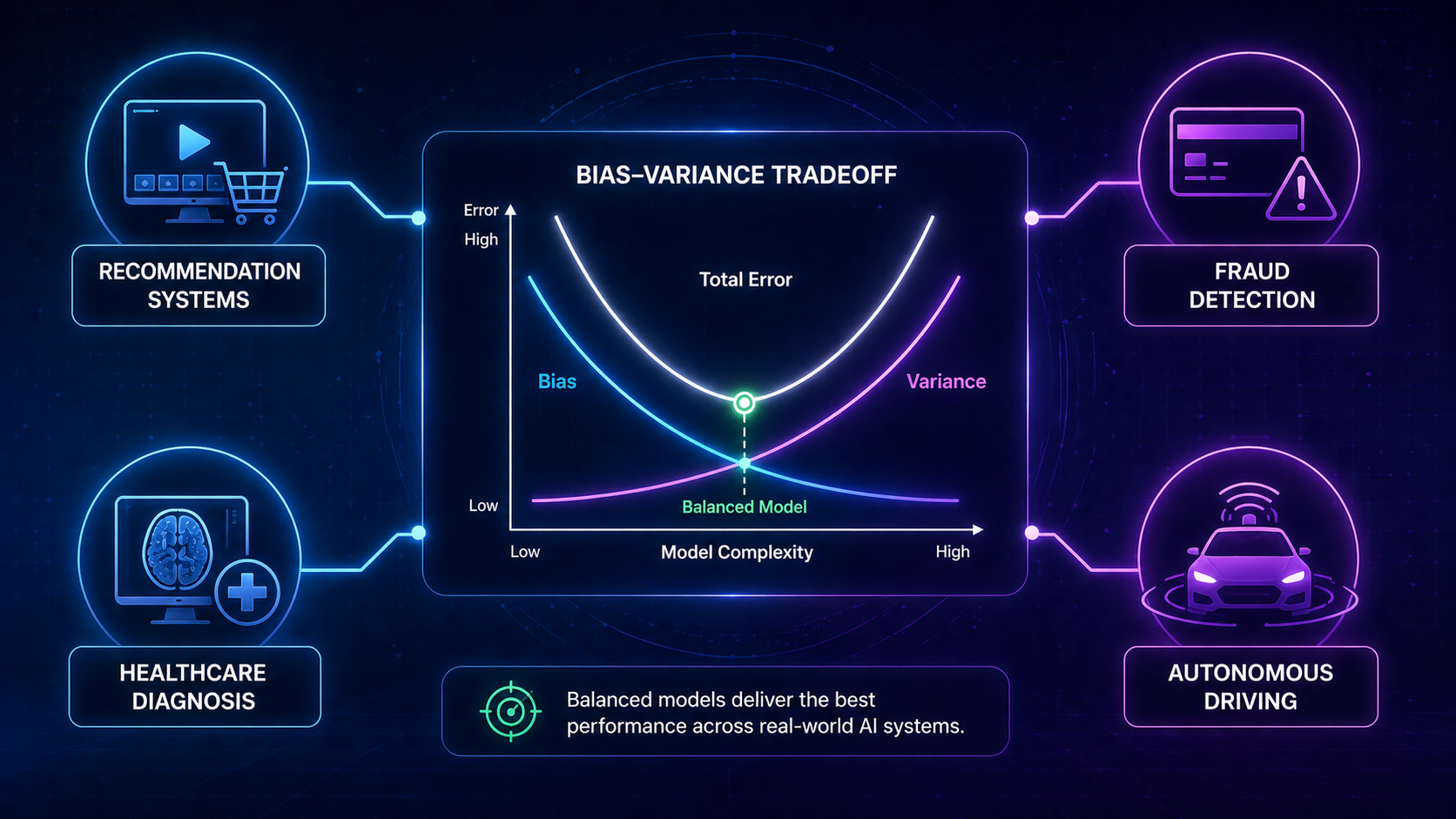

Why the Bias vs Variance Tradeoff Matters in AI

If you remember only one thing from this article, remember this:

👉 The best model is not the simplest or the most complex—it’s the one that generalizes best.

In real-world AI systems:

- Too much bias → your model misses important patterns

- Too much variance → your model breaks on new data

This balance directly impacts:

- Medical diagnoses

- Fraud detection systems

- Self-driving cars

- Recommendation engines

👉 In short: this concept determines whether AI works or fails in the real world

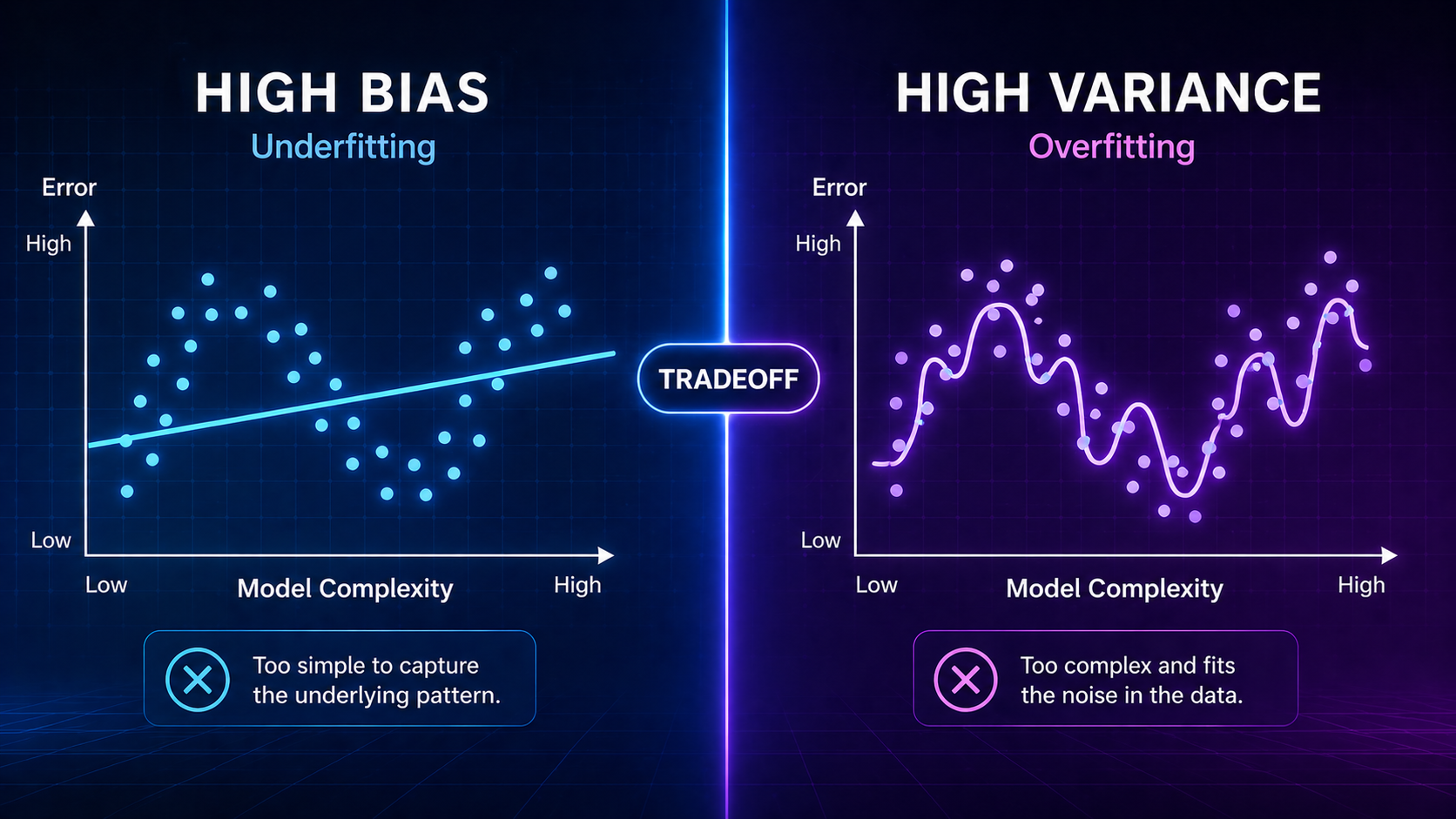

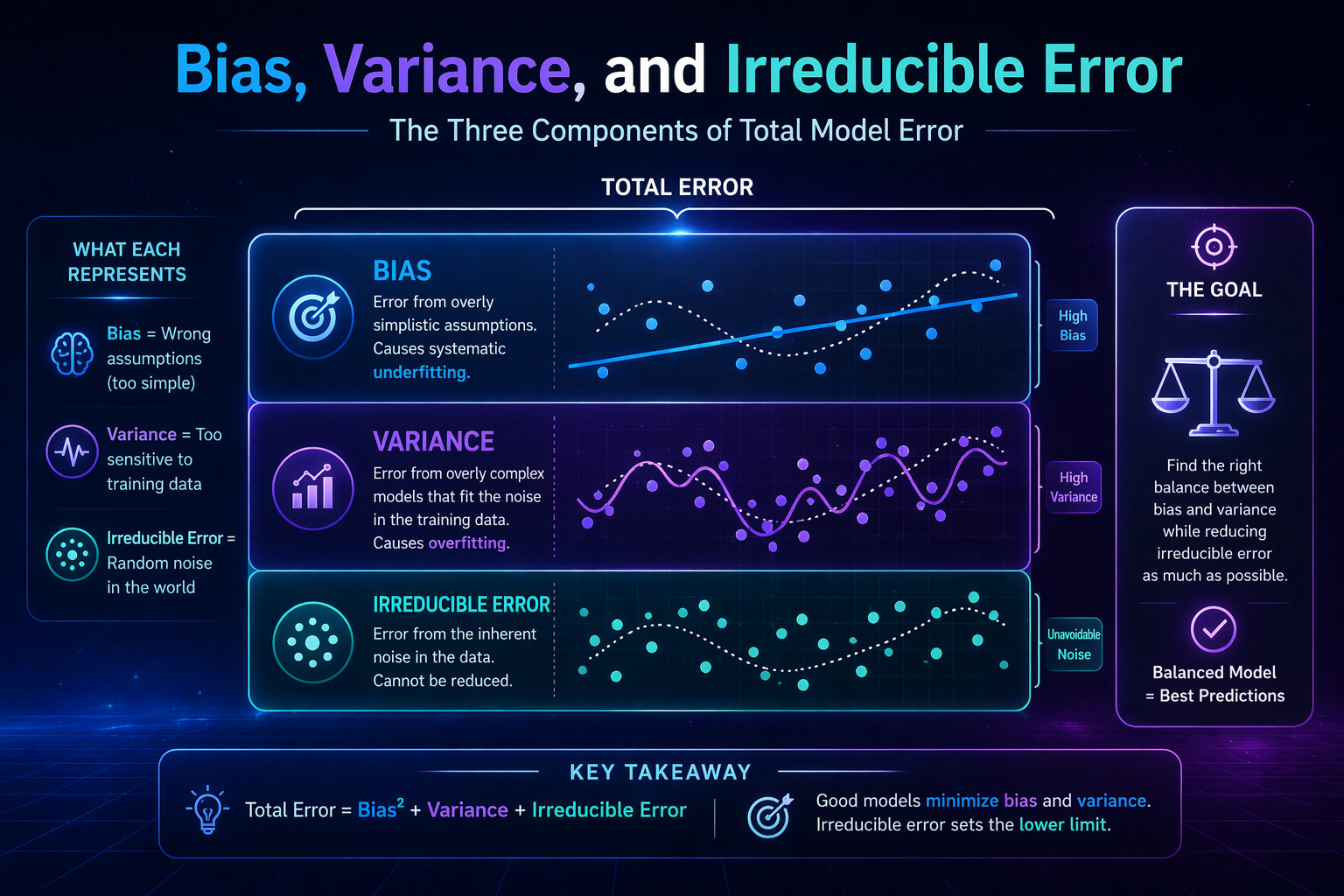

What Is Bias in Machine Learning?

Simple Definition

Bias is the error caused by overly simple assumptions in a machine learning model.

What High Bias Looks Like

A high-bias model:

- Is too simple

- Ignores important patterns

- Performs poorly on both training and testing data

👉 This leads to underfitting

Real-World Example

Imagine predicting house prices using only square footage.

- You ignore location, condition, and market trends

- The model becomes overly simplistic

👉 Result: inaccurate predictions across all scenarios
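
The house-price example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the prices are synthetic, and the numbers (150 per square foot, a 150,000 premium for an expensive area) and the helper names `fit_line`, `rmse`, and `make_houses` are made up for this sketch. The model sees square footage only, so it cannot explain the location premium.

```python
import random

def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x with a single feature."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

def rmse(model, xs, ys):
    """Root mean squared error of the fitted line on the given data."""
    intercept, slope = model
    squared = [(intercept + slope * x - y) ** 2 for x, y in zip(xs, ys)]
    return (sum(squared) / len(squared)) ** 0.5

def make_houses(n, rng):
    """Synthetic prices: 150 per sq ft plus a location premium the model never sees."""
    sqft, price = [], []
    for _ in range(n):
        size = rng.uniform(800, 3000)
        premium = rng.choice([0, 150_000])  # assumed: cheap vs expensive area
        sqft.append(size)
        price.append(150 * size + premium)
    return sqft, price

rng = random.Random(0)
train_x, train_y = make_houses(500, rng)
test_x, test_y = make_houses(500, rng)

model = fit_line(train_x, train_y)  # uses square footage only
train_rmse = rmse(model, train_x, train_y)
test_rmse = rmse(model, test_x, test_y)
print(f"train RMSE: {train_rmse:,.0f}  test RMSE: {test_rmse:,.0f}")
```

In this setup the two errors come out large and close to each other, which is the typical signature of underfitting: more training data would not help, because the missing information is a feature the model was never given.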

What Is Variance in Machine Learning?

Simple Definition

Variance is how much a model’s predictions change when the model is trained on different samples of the data.
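
One way to make this definition concrete is to train the same kind of model on many different datasets and watch how much its prediction at one fixed point moves around. The sketch below assumes a toy data process (`y = sin(x)` plus noise) and two deliberately extreme models, neither of which comes from the article: a rigid one that always predicts the training mean, and a flexible one that copies the nearest training point (1-nearest-neighbor).

```python
import math
import random
import statistics

def sample_dataset(n, rng):
    """Draw n noisy points from y = sin(x) + noise (an assumed toy process)."""
    xs = [rng.uniform(0, math.pi) for _ in range(n)]
    ys = [math.sin(x) + rng.gauss(0, 0.3) for x in xs]
    return xs, ys

def predict_mean(xs, ys, x0):
    """Rigid model: ignore x0 and always predict the training mean."""
    return statistics.mean(ys)

def predict_1nn(xs, ys, x0):
    """Flexible model: copy the label of the closest training point."""
    nearest = min(range(len(xs)), key=lambda i: abs(xs[i] - x0))
    return ys[nearest]

def prediction_variance(predict, x0, n_datasets=300, n_points=30, seed=0):
    """Variance of the prediction at x0 across independently drawn training sets."""
    rng = random.Random(seed)
    preds = []
    for _ in range(n_datasets):
        xs, ys = sample_dataset(n_points, rng)
        preds.append(predict(xs, ys, x0))
    return statistics.pvariance(preds)

x0 = math.pi / 2
var_rigid = prediction_variance(predict_mean, x0)
var_flexible = prediction_variance(predict_1nn, x0)
print(f"rigid model variance:    {var_rigid:.4f}")
print(f"flexible model variance: {var_flexible:.4f}")
```

The flexible model's prediction swings much more from one training set to the next, because it inherits the full noise of whichever single point happens to be closest.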

What High Variance Looks Like

A high-variance model:

- Learns training data too closely

- Captures noise instead of real patterns

- Performs well on training data but poorly on new data

👉 This leads to overfitting

Real-World Example

Think of memorizing answers for a test:

- You do great on familiar questions

- You fail when questions change slightly

👉 Result: poor real-world performance

The Core Mental Model (Easy Way to Remember)

👉 Bias = Assumptions

👉 Variance = Sensitivity

👉 Tradeoff = Balance

Or even simpler:

- High bias → “Too simple”

- High variance → “Too complex”

- Balanced → “Just right”

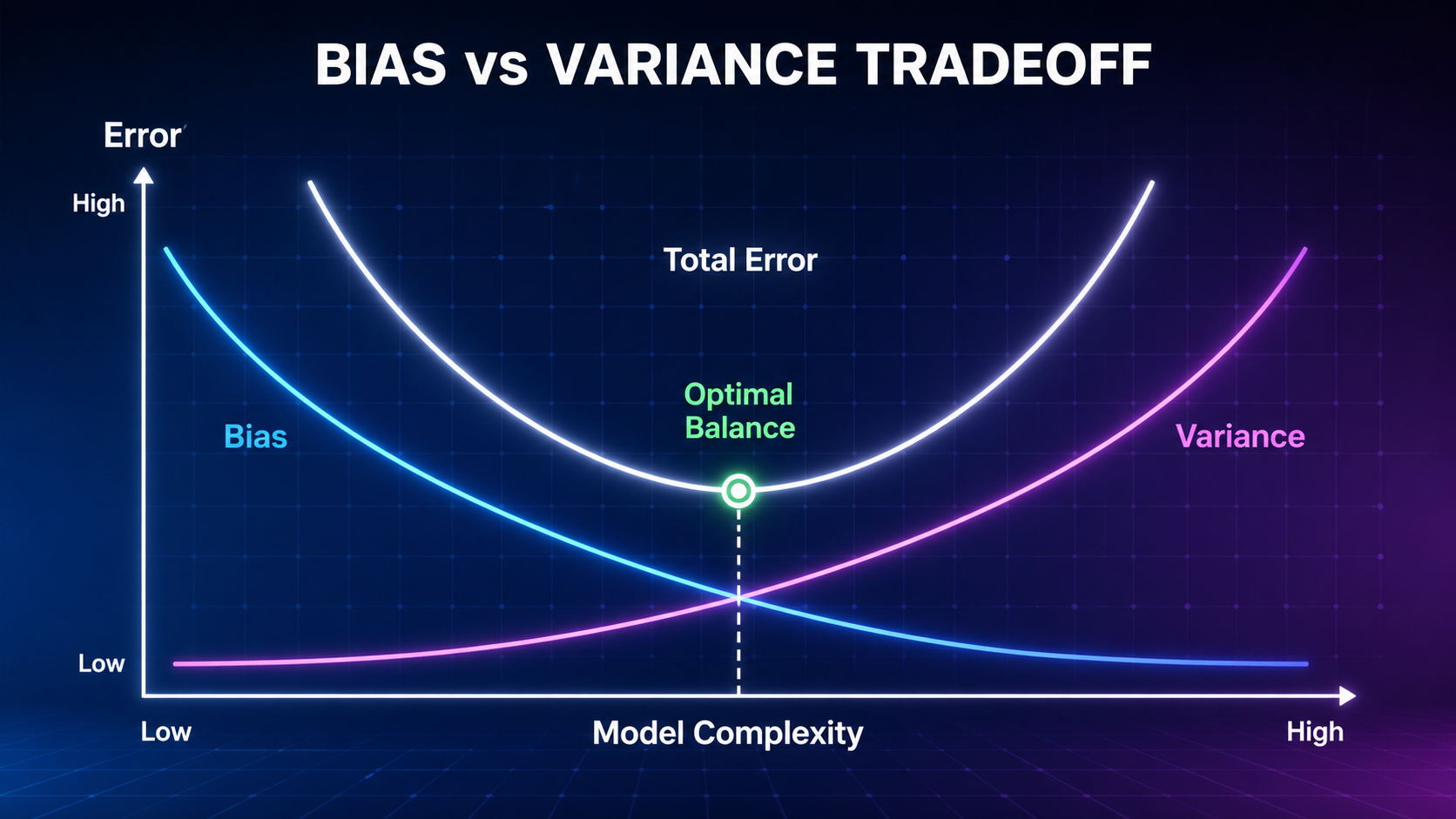

How the Bias vs Variance Tradeoff Works

Step-by-Step Explanation

1. Start with a simple model

- High bias, low variance

- Underfits the data

2. Increase model complexity

- Bias decreases

- Variance increases

3. Reach optimal balance

- Lowest total error

- Best performance on new data
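
For squared-error loss, the "total error" in step 3 has a standard decomposition. The symbols are not from the article and are introduced here as assumptions: $f$ is the true relationship, $\hat{f}$ is the model fitted on a randomly drawn training set, $\sigma^2$ is the variance of the noise in $y$, and the expectation is taken over training sets and noise.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

Increasing complexity typically shrinks the first term and grows the second. The third term does not depend on the model, which is why total error cannot be driven all the way to zero.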

Visual Analogy (Dartboard)

| Scenario | What Happens |
| --- | --- |
| High Bias | Darts cluster far from the center |
| High Variance | Darts spread out randomly |
| Balanced | Darts cluster tightly at the center |

👉 The goal is accuracy AND consistency
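
The dartboard analogy is easy to simulate. In this sketch the bullseye sits at (0, 0), and each scenario is defined by where the thrower aims and how much the throws scatter. The aim points, spreads, and function names are invented for illustration.

```python
import random

def throw_darts(aim, spread, n=2000, seed=0):
    """Simulate n darts scattered around an aim point with Gaussian noise."""
    rng = random.Random(seed)
    return [(rng.gauss(aim[0], spread), rng.gauss(aim[1], spread)) for _ in range(n)]

def summarize(darts):
    """Return (bias squared, variance, mean squared distance from the bullseye)."""
    n = len(darts)
    mean_x = sum(x for x, _ in darts) / n
    mean_y = sum(y for _, y in darts) / n
    bias_sq = mean_x ** 2 + mean_y ** 2  # how far the average dart is from the center
    variance = sum((x - mean_x) ** 2 + (y - mean_y) ** 2 for x, y in darts) / n
    total = sum(x ** 2 + y ** 2 for x, y in darts) / n
    return bias_sq, variance, total

scenarios = {
    "High Bias": throw_darts(aim=(3.0, 0.0), spread=0.2),
    "High Variance": throw_darts(aim=(0.0, 0.0), spread=2.0),
    "Balanced": throw_darts(aim=(0.0, 0.0), spread=0.2),
}
results = {name: summarize(darts) for name, darts in scenarios.items()}

for name, (bias_sq, variance, total) in results.items():
    print(f"{name:14s} bias^2={bias_sq:6.3f}  variance={variance:6.3f}  total={total:6.3f}")
```

In each row the total equals bias squared plus variance, so a thrower can miss the center either by aiming at the wrong spot or by scattering too widely.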

Key Concepts You Must Understand

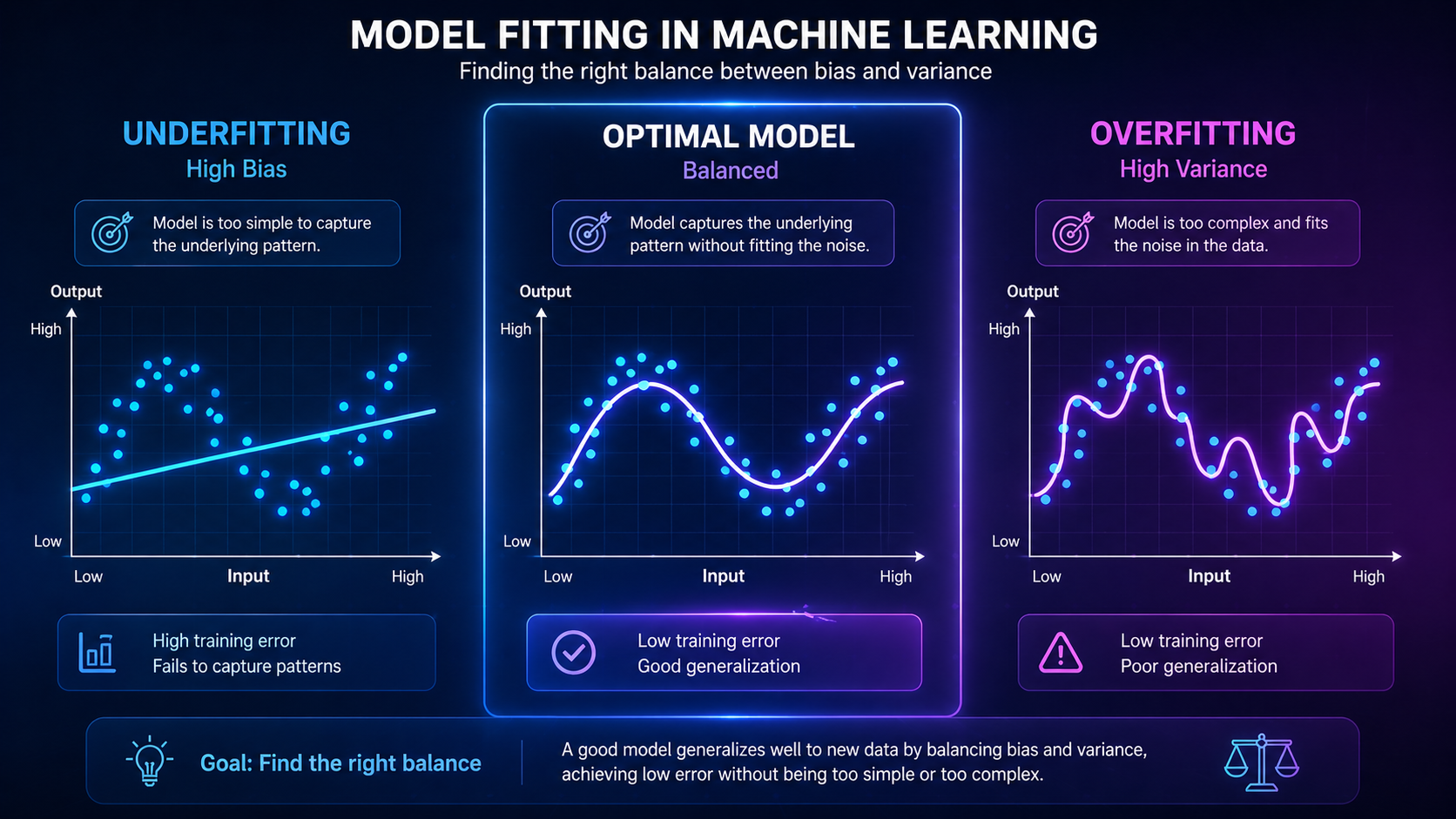

1. Overfitting vs Underfitting

- Underfitting = High bias

- Overfitting = High variance

👉 Learn more: Overfitting vs Underfitting

2. Model Complexity

| Model Type | Behavior |
| --- | --- |
| Simple models | High bias, low variance |
| Complex models | Low bias, high variance |

Examples:

- Linear regression → simpler

- Neural networks → more complex

👉 Related: Neural Networks Explained

3. Generalization

The goal of machine learning is generalization—performing well on unseen data.

👉 The bias vs variance tradeoff directly controls this.

4. Training vs Testing Performance

| Model Type | Training Error | Testing Error |
| --- | --- | --- |
| High Bias | High | High |
| High Variance | Low | High |
| Balanced | Low | Low |
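
The pattern in this table can be reproduced with a small experiment. The sketch below uses k-nearest-neighbor regression, a model chosen here for convenience rather than taken from the article, because a single knob `k` controls its complexity: averaging over every training point is very rigid, `k = 1` is very flexible, and a moderate `k` sits in between. The data process and the specific values of `k` are assumptions.

```python
import math
import random

def make_data(n, rng):
    """Noisy samples of y = sin(x) on [0, 2*pi] (an assumed toy process)."""
    xs = [rng.uniform(0, 2 * math.pi) for _ in range(n)]
    ys = [math.sin(x) + rng.gauss(0, 0.3) for x in xs]
    return xs, ys

def knn_predict(train_x, train_y, x0, k):
    """Average the labels of the k training points closest to x0."""
    order = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x0))
    return sum(train_y[i] for i in order[:k]) / k

def mse(train_x, train_y, xs, ys, k):
    """Mean squared error of k-NN predictions on the points (xs, ys)."""
    squared = [(knn_predict(train_x, train_y, x, k) - y) ** 2 for x, y in zip(xs, ys)]
    return sum(squared) / len(squared)

rng = random.Random(0)
train_x, train_y = make_data(300, rng)
test_x, test_y = make_data(500, rng)

settings = [
    ("High Bias", len(train_x)),  # averages everything: a constant prediction
    ("High Variance", 1),         # memorizes each training point
    ("Balanced", 15),             # smooths over a small neighborhood
]
errors = {}
for label, k in settings:
    train_err = mse(train_x, train_y, train_x, train_y, k)
    test_err = mse(train_x, train_y, test_x, test_y, k)
    errors[label] = (train_err, test_err)
    print(f"{label:14s} k={k:3d}  train MSE={train_err:.3f}  test MSE={test_err:.3f}")
```

The gap between training and testing error is the practical warning sign: a near-zero training error paired with a clearly higher testing error points to high variance, while two similarly high errors point to high bias.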

👉 Related: Training vs Testing Data

Types of Models and Their Bias-Variance Behavior

| Model | Bias | Variance |
| --- | --- | --- |
| Linear Regression | High | Low |
| Decision Trees | Low | High |
| Random Forest | Low to medium | Medium (lower than a single decision tree) |
| Neural Networks | Low | High |

👉 Choosing the right model helps control the tradeoff

Real-World Applications

1. Healthcare AI

- High bias → Missed diagnoses

- High variance → Inconsistent predictions

👉 Balanced models improve patient outcomes

2. Self-Driving Cars

- High bias → Poor understanding of environment

- High variance → Unstable decisions

3. Finance & Fraud Detection

- High bias → Missed fraud

- High variance → Too many false alerts

4. Recommendation Systems

- High bias → Generic suggestions

- High variance → Over-personalization

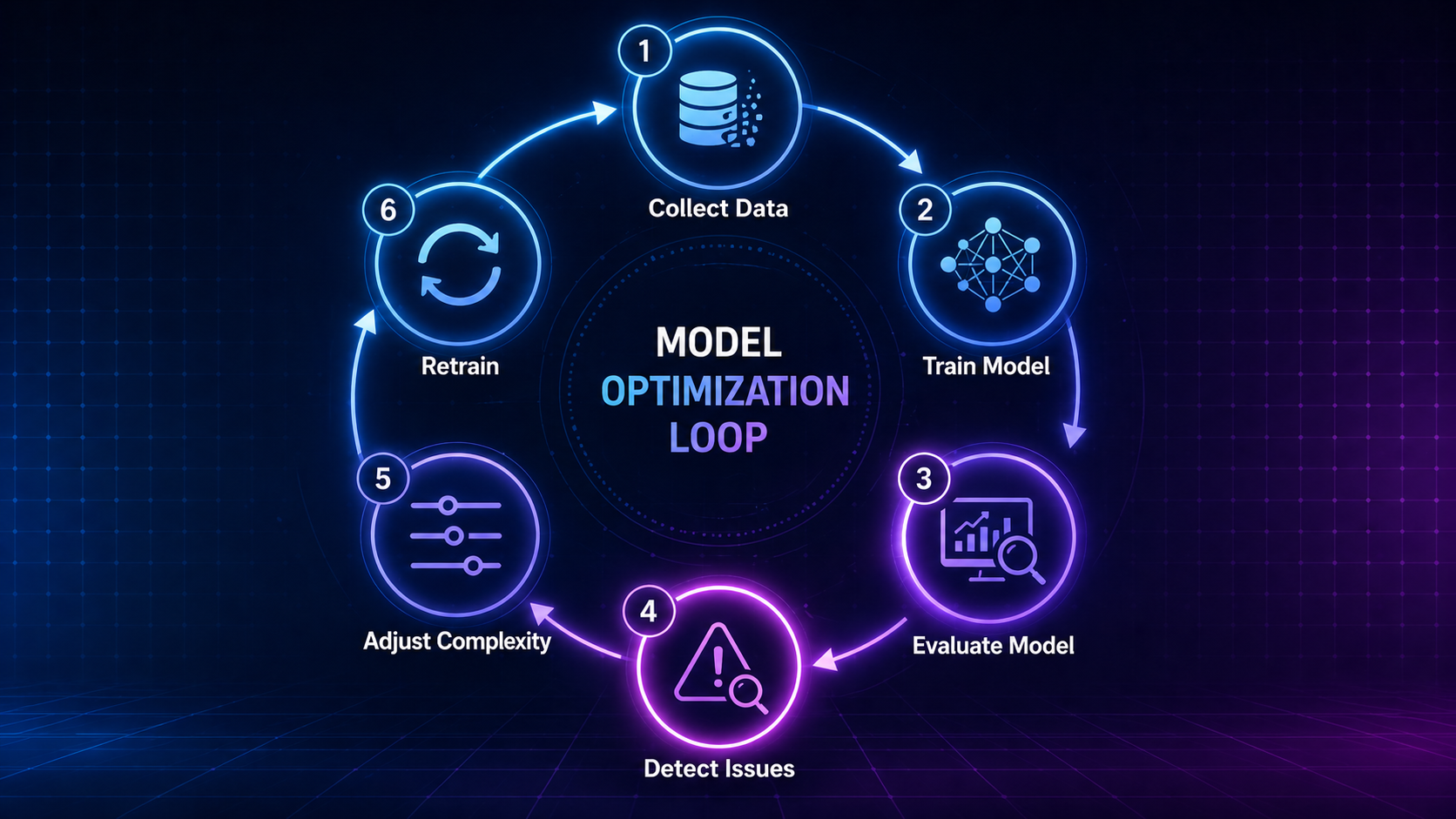

How to Reduce Bias and Variance

Reducing Bias

- Use more complex models

- Add relevant features

- Improve data quality

Reducing Variance

- Use more training data

- Apply regularization

- Use ensemble methods (e.g., Random Forest)

- Use cross-validation to detect overfitting and tune model complexity
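
To see what regularization from this list does, here is a minimal sketch using ridge regression on a one-feature model with no intercept. Everything specific is an assumption made for illustration: the true slope of 2, the five fixed inputs, the noise level, and the penalty value of 2. Both fits see exactly the same simulated datasets, so any difference comes from the penalty alone.

```python
import random
import statistics

TRUE_SLOPE = 2.0
XS = [-2.0, -1.0, 0.0, 1.0, 2.0]  # fixed inputs; only the noise changes per dataset

def fit_slope(xs, ys, penalty):
    """Slope b of y = b*x minimizing squared error + penalty * b**2 (ridge)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + penalty)

def simulate(penalty, n_datasets=2000, noise_sd=3.0, seed=0):
    """Fit the slope on many noisy datasets; return (bias, variance, bias^2 + variance)."""
    rng = random.Random(seed)
    slopes = []
    for _ in range(n_datasets):
        ys = [TRUE_SLOPE * x + rng.gauss(0, noise_sd) for x in XS]
        slopes.append(fit_slope(XS, ys, penalty))
    bias = statistics.mean(slopes) - TRUE_SLOPE
    variance = statistics.pvariance(slopes)
    return bias, variance, bias ** 2 + variance

plain = simulate(penalty=0.0)  # ordinary least squares
ridge = simulate(penalty=2.0)  # same datasets, slope shrunk toward zero

for name, (bias, variance, total) in [("no penalty", plain), ("ridge", ridge)]:
    print(f"{name:10s} bias={bias:+.3f}  variance={variance:.3f}  total={total:.3f}")
```

The penalty pulls every estimate toward zero, which introduces some bias but cuts variance by more in this noisy, small-data setting. A much larger penalty would tip the balance the other way, so the penalty strength is itself something to tune.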

Bias vs Variance vs Related Concepts

| Concept | Meaning |
| --- | --- |
| Bias | Error from assumptions |
| Variance | Error from sensitivity |
| Overfitting | High variance problem |
| Underfitting | High bias problem |
| Noise | Random data errors |

External Resources for Deeper Learning

To explore further:

- Learn more from IBM’s guide on the bias-variance tradeoff

- Explore Google’s Machine Learning Crash Course

Future Outlook of the Bias vs Variance Tradeoff

As AI evolves:

- AutoML tools may automate more of the search for a well-balanced model

- Advances in deep learning may improve generalization

- Better data pipelines may reduce variance by supplying more and cleaner training data

However:

👉 The bias vs variance tradeoff will always remain a foundational concept in AI

FAQ: Bias vs Variance Tradeoff

What is the bias vs variance tradeoff in simple terms?

The bias vs variance tradeoff is the balance between underfitting (high bias) and overfitting (high variance) in machine learning models.

Why is the bias vs variance tradeoff important?

The bias vs variance tradeoff determines how well a model performs on new, unseen data, which is critical for real-world AI systems.

What is the difference between bias and variance?

Bias refers to errors caused by overly simple assumptions, while variance refers to errors caused by a model being too sensitive to training data.

What causes high bias?

High bias is caused by overly simple models that ignore important patterns in the data, leading to underfitting.

What causes high variance?

High variance is caused by overly complex models that learn noise from training data, leading to overfitting.

Is overfitting related to variance?

Yes, overfitting occurs when a model has high variance and learns noise instead of true patterns.

Is underfitting related to bias?

Yes, underfitting occurs when a model has high bias and fails to capture important relationships in the data.

How do you manage the bias vs variance tradeoff in practice?

You can manage the bias vs variance tradeoff by adjusting model complexity, improving data quality, and using techniques like regularization, cross-validation, and more training data.

Does the bias vs variance tradeoff apply to deep learning?

Yes, the bias vs variance tradeoff is especially important in deep learning because complex neural networks can easily overfit data.

Can a model have both high bias and high variance?

Yes, poor model design or low-quality data can cause a model to have both high bias and high variance at the same time.

What is the goal of the bias vs variance tradeoff?

The goal is to minimize total error and achieve the best possible generalization on new, unseen data.

Why is bias vs variance important in machine learning?

The bias vs variance tradeoff is important because it directly affects a model’s ability to generalize and make accurate predictions in real-world scenarios.

Conclusion

The bias vs variance tradeoff is one of the most important concepts in machine learning.

It explains:

- Why models fail

- How to improve performance

- How to build reliable AI systems

👉 Mastering this concept helps you move from basic understanding → real-world AI expertise

Recommended Next Topics

Continue learning: